Abstract

One of the promising directions of national development in the twenty-first century is the growth and application of new technologies, among which artificial intelligence is given priority. National strategies are being developed and carried out. According to these policies, introducing artificial intelligence into the operations of companies and organizations and into government activities as a whole, together with creating new artificial intelligence products, is pivotal. Many countries are competing for the leading place in introducing artificial intelligence into everyday life, among them the USA, Germany, the United Arab Emirates, Great Britain, and China. Russia is no exception. To develop artificial intelligence technologies, strategies are being shaped and new standards are being adopted that reflect the nature of the technology. At the same time, it is important to determine the degree of impact of artificial intelligence technologies on various areas, one of which is social media, which unites users from all over the world. It is also necessary to identify what kind of technology is used on a given social media platform. To obtain the most complete and objective data, a sociological survey was conducted among the population of various countries, which made it possible to establish the public's position on the use of artificial intelligence technologies on social networks.

Keywords: Artificial intelligence, digitalization, legal regulation, self-regulation, social media, technology

Introduction

Artificial intelligence is currently given top priority in all the areas where it is used. This is confirmed by the volume of investment in artificial intelligence technologies: in 2020 it grew by 40 %, reaching $ 67.9 billion. The private share of investment in developing artificial intelligence technologies increased by 9.3 % in 2020, exceeding $ 40 billion (Artificial Intelligence…, n.d.). The number of areas where artificial intelligence technologies are beginning to be used keeps growing. In this regard, besides investing in the advancement of artificial intelligence technologies, countries are implementing policies aimed at regulating these technologies, and these policies are now beginning to take a more distinct shape.

Problem Statement

The need for legal regulation of AI technologies is gradually being recognized, while the connection between AI and the Internet grows stronger and manifests itself from various angles. However, several points will create difficulties in the legal regulation of AI technologies. One of them is that the legislator lacks full information about such technologies and their capabilities. In this case, the law must be clear and well elaborated (Stepanov, 2018, p. 9). At the same time, the current level and nature of the scientific understanding of this phenomenon lag behind the needs of legal practice and the process of updating the law.

Ilya Durnitsyn, in turn, concludes that the definition of AI is framed so as to cover the widest possible range of activities for the future development of AI, rather than to give a clear and concise explanation of the basic concept from a technical point of view (as cited in Artificial intelligence and law:…, n.d.).

Research Questions

As for the legal basis for regulating AI technologies, policy documents have already been drafted in many countries. China has the “New Generation Artificial Intelligence Development Program”, a strategic government program for the development of the AI sphere until 2030. The United States has the “National Strategy in the Sphere of Artificial Intelligence”. The UAE and Saudi Arabia adopted “Vision 2030” growth strategies in 2019, which were based on artificial intelligence. In Germany, the government has since 2018 pursued a policy aimed at developing artificial intelligence until 2025. The UK published the “Industry Strategy for Artificial Intelligence” in 2018. In Russia, the “National Strategy for the Development of Artificial Intelligence for the Period up to 2030” was approved in 2019.

Analysis of the strategies and other documents devoted to AI technologies allows us to identify features that are common to most of them. These are provisions on adopting the technologies in every area of both social life and the life of the state as a whole; finding promising ways of developing AI; adapting the regulatory framework; providing the necessary infrastructure; maintaining the economy and national security of the country, etc. In social media, however, AI has both positive applications, which arise through self-regulation, and negative impacts that require government control.

The positive applications are related to improving the service for users. AI systems allow communication with users in the form of bots and virtual influencers (Kuvshinova, 2019, p. 85). Face recognition technology is widely applied, for example, for automatically finding friends in photos and for restoring access to a user account. Vkontakte also uses this technology to protect users from document leaks: passport photos are automatically excluded from public access. AI technologies can automate user support and moderation functions. AI technologies are an effective tool for solving a wide range of problems (Kharitonova & Savina, 2020, p. 525), but their use may also have negative implications.

Negative impacts of applying AI in social media include the “filter bubble” and “echo chamber” phenomena: the formation of social networks that systematically exclude sources of information. Another danger of using AI technologies in social media is the possibility of manipulation. The user's digital footprint is the basis for the operation of both recommendation systems and other algorithms that reveal additional, hidden information, for example, about psychological characteristics, income, marital status, etc. The ability of AI systems to find non-obvious, hidden connections between objects and phenomena can be used for commercial, political or criminal purposes.

Facebook removed the Cambridge Analytica account and closed the ability to collect user and friend profile data through third-party applications, but both the network and its partners still have access to the information and, therefore, the ability to manipulate (Facebook Uses Artificial Intelligence …, n.d.). Social media first analyze the psychological profile of the user and then supply him or her with the information most likely to result in the successful sale of goods and services.

We can therefore speak of growing human vulnerability in the area of Internet communication (Pastukhov & Losavio, 2017, p. 240). The peculiarities of how communication is organized in social networks can provoke a massive impact of the Internet on the psyche of individuals and give rise to a new type of addiction: Internet addiction. Using AI technology to create more appealing social media services can keep a user on the site for longer, thereby aggravating his or her addiction. In that vein, issues concerning the ethical principles, cultural norms and values that govern the development and application of AI systems are becoming relevant (Malyshkin, 2019, p. 445).

Purpose of the Study

The purpose of the study is to substantiate the limits of self-regulation and state regulation of the use of AI in social media, based on the practice of its use and on its assessment by users and the scientific community.

Research Methods

The methodological basis of the research consists of the following methods of scientific knowledge: general scientific methods, including analysis, synthesis, induction and deduction. Along with these, the work uses specifically legal methods, such as the technical-legal and comparative-legal methods. The systematic method is the most important: it was used to study issues associated with AI and its interaction with social media, and to identify trends in technology development. This methodology provided a holistic view of AI technology and its relationship to social media sites.

Findings

To obtain the most complete data for the analysis, we conducted a sociological survey of social media users aimed at identifying their attitude towards AI and their opinions on the research topic. The survey was conducted anonymously using Google Forms. See https://forms.gle/ZMhZF3buoUsL2Em98.

The sample consisted of 297 respondents and was formed according to age and gender criteria. Of the respondents, 65.3 % were aged 21 to 39, 29 % were aged 15 to 20, and 5.4 % were 40 or older; 51.2 % were women and 48.8 % were men.

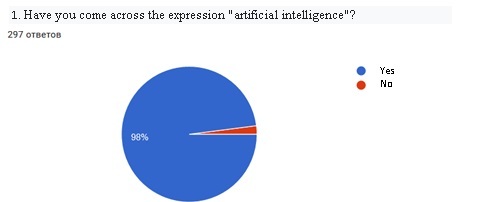

As the survey results show, almost all respondents (98 %) are familiar with the term "artificial intelligence" (Figure 1).

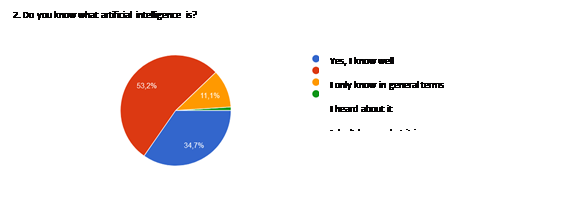

Although the overwhelming majority of respondents have encountered the term "artificial intelligence", most of them do not understand its essence well enough.

Only 34.7 % of respondents know well what it is. The rest know little beyond the name: 53.2 % know about it only in general terms, 11.1 % have merely heard about it, and 1 % do not know the essence of AI at all (Figure 2).
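As a rough cross-check of these shares, the percentages can be converted back into approximate numbers of respondents. The sketch below assumes that each percentage refers to the full sample of 297 respondents and was rounded to one decimal place; the names `reported_shares` and `approximate_count` are illustrative, and the resulting counts are estimates rather than figures reported by the survey.

```python
# Illustrative sketch: convert the reported shares into approximate head counts.
# Assumption: every share is taken out of the full sample of 297 respondents.
SAMPLE_SIZE = 297

# Shares (in percent) describing how well respondents understand AI (Figure 2).
reported_shares = {
    "know well": 34.7,
    "know in general terms": 53.2,
    "have only heard of it": 11.1,
    "do not know at all": 1.0,
}


def approximate_count(share_percent: float, sample_size: int = SAMPLE_SIZE) -> int:
    """Return the nearest whole number of respondents for a percentage share."""
    return round(share_percent / 100 * sample_size)


estimated_counts = {
    label: approximate_count(share) for label, share in reported_shares.items()
}

for label, count in estimated_counts.items():
    print(f"{label}: about {count} respondents")

print("estimated total:", sum(estimated_counts.values()))
```

Under these assumptions the four estimates add up to the full sample of 297, which suggests that the reported shares are internally consistent.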

Regarding the negative impact of AI on social media, the respondents were asked to formulate their own answer.

All the negative consequences suggested by the respondents can be divided into groups: encroachments on users of social media sites (13.4 %); the technologies using AI (8.4 %); failures in operation (8 %); human factors (6.4 %); government regulation of the use of AI in social media sites (5.4 %); distribution of advertising (2.4 %).

First, according to the respondents, the negative consequences associated with encroachments on users of social media consist in violations of the privacy policy, unauthorized distribution of users' personal data, remote fraud, hacking of social media and the use of AI by users for personal purposes.

AI technologies cause concern among respondents because of the complexity of using the technologies themselves, AI's incorrect interpretation of some user actions, the “deception” of AI, and its inability to recognize individual commands or phrases, which may waste a great deal of time or lead to usage errors.

According to the respondents, AI is devoid of "humanity", which can make the technology operate mechanically, without taking into account the individuality of a person, his or her behavior, etc. In addition, the respondents noted as a negative consequence that AI could affect the personality itself and its outlook. If a person stops thinking, the desire to do something will disappear and the skills of critical thinking will be lost.

As for governmental control, the majority of respondents indicated that the state, with the help of AI, would be able to control and monitor the users of social media sites, as well as to manipulate public opinion.

75.8 % of respondents believe that the government should legally regulate the use of AI, while 10.1 % of respondents answered this question negatively.

Among the options offered to respondents, the answers about the positive influence of AI on social media were as follows. Social media will enable self-learning and suggest interesting topics for hobbies (48.8 %). The privacy policy of social media sites will improve (43.4 %). Communication in social networks will reach a higher level of quality, without insults, bullying and other negative elements of communication (41.4 %). Copyrighted content will be protected (40.7 %). Social media will self-regulate (38.7 %). Information posted on social media accounts will be reliable (25.9 %). Users will be identified on social media platforms (25.6 %).

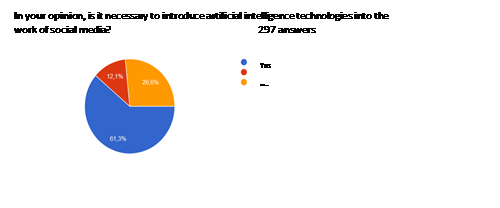

The majority of respondents considered the research topic relevant and showed interest in it. Analysis of the next question showed that 61.3 % agree that AI should be introduced into social media sites (Figure 3).

Conclusion

To counteract these threats, it is necessary to familiarize society with them and to teach citizens to be careful when contacting people on social media sites. One solution could be the certification of public events, which would confirm that the information posted about them is real. The task of technical specialists will then be to protect the event databases and the mechanism for their certification.

References

Artificial Intelligence (Global Market) (n.d.). https://www.tadviser.ru/index.php/-Статья:Искусственный_интеллект_(мировой_рынок)#2021:_Gartner:_5_.D0.BF.D1.80.D0.BE.D0.B3.D0.BD.D0.BE.D0.B7.D0.BE.D0.B2_.D1.80.D0.B0.D0.B7.D0.B2.D0.B8.D1.82.D0.B8.D1.8F_.D1.80.D1.8B.D0.BD.D0.BA.D0.B0_.D0.98.D0.98

Artificial intelligence and law: is there contact? (n.d.). http://www.garant.ru/news/1401154/

Facebook Uses Artificial Intelligence to Predict Your Future Actions for Advertisers, Says Confidential Document (n.d.). https://theintercept.com/2018/04/13/facebook-advertising-data-artificial-intelligence-ai/

Kharitonova, Yu. S., & Savina, V. S. (2020). Artificial intelligence technology and law: modern challenges. Bulletin of Perm University. Legal sciences, 49, 524–549.

Kuvshinova, D. D. (2019). Virtual influencer as an advertising tool: genesis, characteristics, functions. In Communications have the epoque of numerical transformation (pp. 84–87). L’Harmattan.

Malyshkin, A. V. (2019). Integration of artificial intelligence into public life: some ethical and legal problems. Bulletin of St. Petersburg University. The Law, 3, 444–460.

Pastukhov, P. S., & Losavio, M. (2017). Using information technologies to ensure the security of the individual, society and the state. Bulletin of Perm University. Legal sciences, 36, 231–236.

Stepanov, O. A. (2018). On the problem of concretization of law in the context of digitalization of public practice. Law. Journal of the Higher School of Economics, 3, 4–23.

Copyright information

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

About this article

Publication Date: 31 January 2022

Article DOI: 10.15405/epsbs.2022.01.56

eBook ISBN: 978-1-80296-121-8

Publisher: European Publisher

Volume: 122

Edition Number: 1st Edition

Pages: 1-671

Subjects: Civilistic Doctrine, Digital Transformation, Sociocultural Transformations, Philosophy of Law, Public Authorities

Cite this article as:

Kovaleva, N. N., Anisimova, A. S., Tugusheva, Y. M., & Danilova, M. A. (2022). Artificial Intelligence And Social Media: Self-Regulation And Government Control. In S. Afanasyev, A. Blinov, & N. Kovaleva (Eds.), State and Law in the Context of Modern Challenges, vol 122. European Proceedings of Social and Behavioural Sciences (pp. 347-352). European Publisher. https://doi.org/10.15405/epsbs.2022.01.56