Abstract

Threat and error management (TEM) is considered a key responsibility of pilots, flight instructors and flight examiners. This study presents a new model for teaching threat and error management related to flight instruction that is based on the system-theoretic process analysis (STPA) (

Keywords: Threat and Error Management, TEM, STPA, Flight Instruction, Pilot, Safety

Introduction

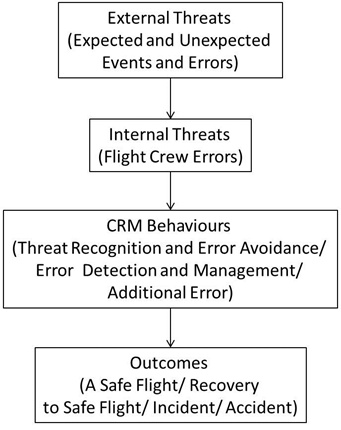

Threat and error management (TEM) is considered a key ability of pilots, flight instructors and flight examiners (ACG, 2014; EASA, 2011). Helmreich, Klinect and Wilhelm (1999) proposed a model of TEM that focuses on front-line operators. According to the original TEM model, the flight crew is responsible for managing expected or unexpected external threats and errors committed by other operators, for avoiding additional errors of their own, and for managing the errors they do commit (Figure

Using this linear model of TEM, Klinect (2005) investigated the TEM process at 10 airlines. He recorded 7,257 errors during 2,612 observation flights. The flight crews managed most of the errors and threats. Nevertheless, the crews did not detect and manage 27% of the errors in time. In 1,347 cases the TEM process resulted in a hazardous flight status. These data are important for raising awareness of the “statistical normality” of threats and errors in the daily activity of pilots, who are the front-line operators of the aviation industry.
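To put these figures in proportion, the following minimal sketch derives two simple ratios from the numbers quoted above. It assumes that the 27% refers to the 7,257 observed errors; the variable names are illustrative and the derived values are rounded estimates, not results reported by Klinect (2005).

```python
# Figures quoted from Klinect (2005) in the paragraph above.
observed_errors = 7257
observation_flights = 2612
unmanaged_share = 0.27  # share of errors not detected and managed in time

# Average number of observed errors per observation flight.
errors_per_flight = observed_errors / observation_flights

# Assumption: the 27% applies to the 7,257 observed errors.
unmanaged_errors_estimate = round(unmanaged_share * observed_errors)

print(f"Errors per observed flight: {errors_per_flight:.2f}")
print(f"Estimated unmanaged errors: {unmanaged_errors_estimate}")
```

On these assumptions, an observed flight involved between two and three errors on average, which illustrates why errors are better treated as a normal part of operations than as rare exceptions.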

Problem Statement

The original model of TEM describes the inputs and outputs of TEM, but does not specify the process in between. Insight into the TEM process can help training organizations analyse hazardous processes and develop meaningful exercises. Furthermore, the original model of TEM (Helmreich et al., 1999) focuses on the pilots as the main players in threat and error management and disregards other key players that contribute to the process more or less directly. It is very likely that the threats and errors encountered by pilots are symptoms of deeper trouble in the system that could be resolved at another level. As system safety theorists and practitioners have shown, management, regulatory agencies, industry associations and even the government play a major role in shaping the work of front-line operators (Dekker, 2006, 2011; Leveson, 2011; Koglbauer & Leveson, 2017; Rasmussen, 1997).

The goal of a flight training course is to train candidates to the level of proficiency necessary to pass the assessment of competence for the required license or rating. The outcome of TEM in flight training can be a safe flight, recovery to a safe flight, or the occurrence of an incident or accident. An accident is an event that leads to injury, loss of life, or damage to aircraft or property (Leveson, 2011). According to Rasmussen (1997), safety depends on the control of work processes so as to avoid accidental harm. Accidents occur because safety constraints are not appropriate or are not properly enforced throughout the whole system, not only at the front line of operation. To avoid accidents, hazards need to be controlled. A hazard is a “system state that, together with a particular set of worst-case […] conditions, will lead to an accident” (Leveson, 2011, p. 184). According to Leveson (2011), safety is an emergent hierarchical system property, not a component property. Koglbauer (2016, 2017) proposed a preliminary system-theoretic model of TEM in flight instruction. The role that company management, regulatory agencies, industry associations or government can play in TEM needs further consideration and is addressed in this paper.

Research Questions

This study outlines a generic model of the system involved in threat and error management in flight training.

Purpose of the Study

This study presents an update of the system-theoretic model of TEM in flight instruction (Koglbauer, 2016, 2017). The system-theoretic model presented here addresses the higher-level controllers in the hierarchy of the aviation system such as company management, authorities and industry associations that shape the interactions among flight instructor, trainee, automation and environment.

Research Methods

The System-Theoretic Accident Model and Process (STAMP) developed by Leveson (2004; 2011) is at the core of STPA (Leveson, 2011). STPA can be used to model a sociotechnical system and to identify unsafe control actions and the safety constraints that can be enforced at different hierarchical levels of the system. In STPA the process is modelled using control loops. The controllers can be people (e.g., flight instructors, trainees, pilots, inspectors, managers, regulators) or automation (e.g., autopilot). The conditions necessary to control a process in a given time and space are clear goals, information or feedback about the process, knowledge of the process, and the ability to influence it (Leveson, 2011). In addition, STPA enables the analysis of hazards involving multiple controllers.
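The four conditions for control named above can be made concrete as a small data structure. The following is a minimal sketch under stated assumptions, not part of the STPA method itself: the class `Controller`, its fields and the example entries are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Controller:
    """One controller in a control loop (illustrative sketch only)."""
    name: str
    goals: List[str] = field(default_factory=list)
    feedback: List[str] = field(default_factory=list)
    process_model: List[str] = field(default_factory=list)  # knowledge
    control_actions: List[str] = field(default_factory=list)  # ability to influence

    def missing_conditions(self) -> List[str]:
        """Return the conditions for control that have no entry yet."""
        checks = {
            "goals": self.goals,
            "feedback": self.feedback,
            "process model": self.process_model,
            "control actions": self.control_actions,
        }
        return [label for label, items in checks.items() if not items]


# Hypothetical example entries, not taken from the paper.
instructor = Controller(
    name="Flight instructor",
    goals=["safe and effective training flight"],
    feedback=["instruments", "trainee behaviour"],
    process_model=["aircraft state", "trainee skill level"],
    control_actions=["verbal instruction", "take over controls"],
)
autopilot = Controller(
    name="Autopilot",
    goals=["hold selected altitude"],
    process_model=["aircraft dynamics"],
    control_actions=["pitch commands"],
)

print(instructor.missing_conditions())  # no gaps recorded for the instructor
print(autopilot.missing_conditions())   # feedback has not been described yet
```

A check of this kind only flags gaps in the description of a control loop; whether the goals, feedback and process model are adequate remains a matter for the analyst.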

Findings

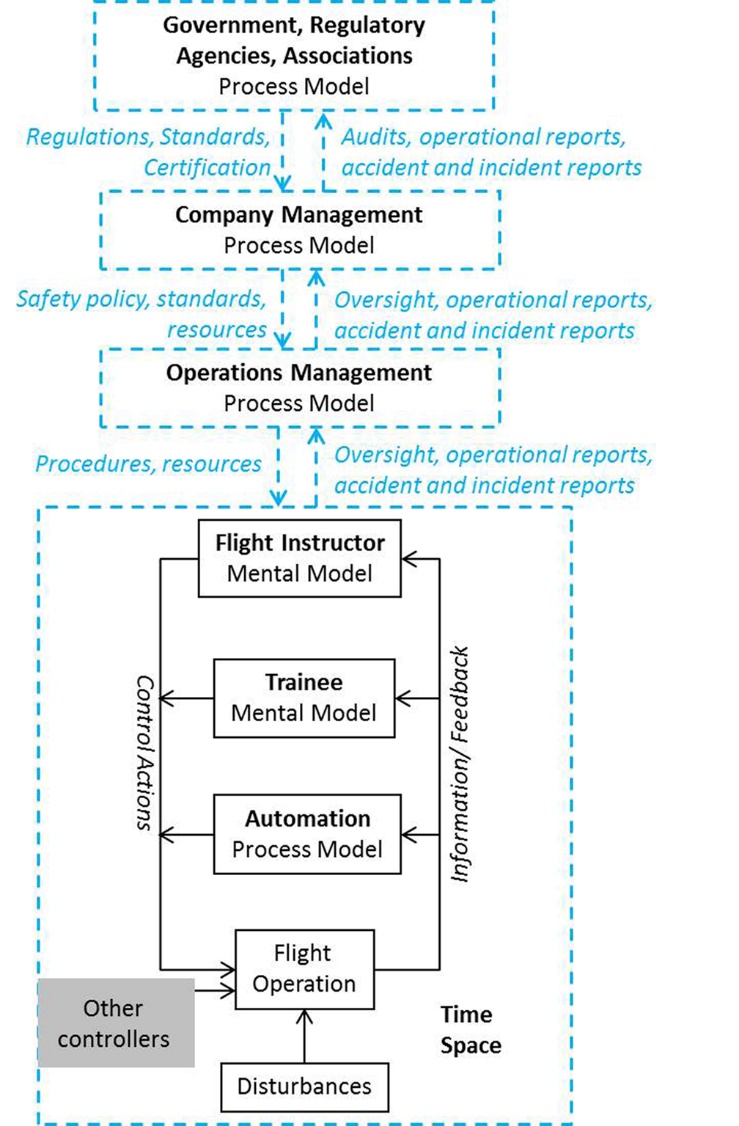

The generic system involved in flight training and modelled according to STPA is illustrated in Figure

At the company level the regulations are interpreted and implemented. The organization or company management establishes the operations management for flight training, and provides resources, policies and oversight of the activity. The CAA conducts investigations and audits of the certified training organization of the company. The CAA can also amend, limit, suspend or revoke the company’s certificate when the conditions according to which it was issued are no longer fulfilled. The operations management is responsible for development and implementation of training programs, staff planning, scheduling, monitoring, record-keeping, and reporting. Finally, at the bottom level we meet the flight instructors and trainees involved in the productive process. The flight instruction work is monitored and shaped by externally given requirements and resources. Time and space are critical features of the process model.

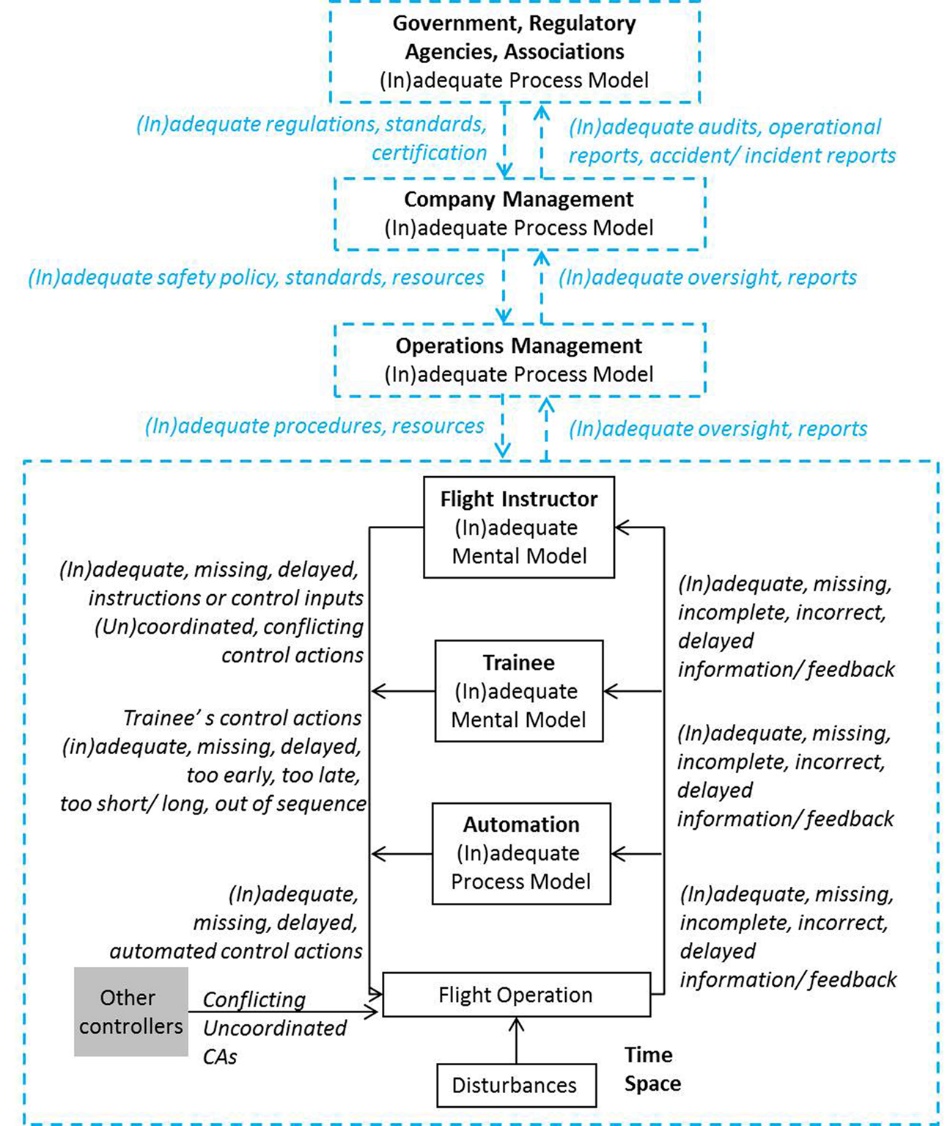

Using the STPA-methodology (Leveson, 2011) generic unsafe control actions (UCAs) can be systematically determined for each hierarchical level and controller/ agent. A set of generic control actions and UCAs related to flight instruction are listed in Table
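As an illustration of how UCAs can be determined systematically, the sketch below crosses each control action with the four generic ways in which a control action can be unsafe, as commonly described in the STPA literature (Leveson, 2011). The controllers and control actions used here are hypothetical examples, and the output is a list of candidate prompts for an analyst to review, not a set of confirmed UCAs.

```python
from enum import Enum
from itertools import product


class UcaType(Enum):
    """Generic ways in which a control action can be unsafe in STPA."""
    NOT_PROVIDED = "is not provided when needed"
    PROVIDED_UNSAFE = "is provided when it creates a hazard"
    WRONG_TIMING = "is provided too early, too late, or out of sequence"
    WRONG_DURATION = "is stopped too soon or applied too long"


def candidate_ucas(control_actions):
    """Cross every (controller, action) pair with every UCA type."""
    return [
        f"{controller}: '{action}' {uca.value}"
        for (controller, action), uca in product(control_actions, UcaType)
    ]


# Hypothetical control actions, chosen for illustration only.
actions = [
    ("Flight instructor", "take over controls"),
    ("Operations management", "schedule training flight"),
]

for prompt in candidate_ucas(actions):
    print(prompt)
```

Each generated prompt still has to be judged in context: a candidate becomes a UCA only if, under some conditions, it can lead to a hazard.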

When two or more controllers perform control actions (CAs), the outcome may be unsafe because of conflicts or a lack of coordination among the controllers. For two controllers, four typical cases can be distinguished (Ishimatsu, Leveson, Fleming, Katahira, Miyamoto & Nakao, 2011; Leveson, 2011):

Only one safe control action is provided. For example, when the company management provides appropriate standards but the operations management does not provide the employees with the resources necessary to implement them; or when the operations management reports safety problems and the company management does not address them (Ioannou et al., 2017);

Multiple safe control actions are provided resulting in excessive inputs (e.g., additive stick inputs of the flight instructor and of the trainee);

Both safe and unsafe control actions are provided (e.g., the international associations provide adequate recommendations, but the national authorities implement them inadequately);

Only unsafe control actions are provided. For example, when the legislation does not implement a just culture (Janezic, 2016), instructors who should report incidents or errors may fear unfair treatment and not report them. If errors and incidents are not addressed, people and organizations cannot learn from them and safety problems recur.
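The four cases above can be sketched as a simple classification of what two controllers provide. This is a simplified illustration under stated assumptions: each control action is encoded as `True` (safe in isolation), `False` (unsafe) or `None` (not provided), and the function name and return labels are hypothetical. In a real analysis, safety is judged in context, not from a single flag.

```python
from typing import Optional


def classify_two_controllers(a: Optional[bool], b: Optional[bool]) -> str:
    """Map two control actions onto the four typical cases.

    True  = control action is safe in isolation
    False = control action is unsafe
    None  = no control action is provided
    """
    provided = [x for x in (a, b) if x is not None]
    if not provided:
        # Outside the four cases listed in the text.
        return "no control action provided"
    safe = sum(1 for x in provided if x)
    unsafe = len(provided) - safe
    if safe == 2:
        return "multiple safe control actions"
    if safe == 1 and unsafe == 1:
        return "both safe and unsafe control actions"
    if safe == 1:
        return "only one safe control action"
    return "only unsafe control actions"


# Standards are provided, but the resources to implement them are not.
print(classify_two_controllers(True, None))
# Instructor and trainee both apply a stick input that is safe on its own.
print(classify_two_controllers(True, True))
```

The second call shows why the classification matters: two inputs that are each safe in isolation can add up to an excessive input, so the combination has to be examined as well as the individual actions.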

Using the STPA-methodology (Leveson, 2011) causal scenarios for the identified unsafe control actions can be determined by examining the parts of the control loops: information/ feedback, knowledge (mental or process models), control actions, time, space and disturbances. For determining threats and errors related to flight training the generic control structure illustrated in Figure

Conclusion

This paper proposes a generic model of threat and error management for flight instruction based on the System-Theoretic Process Analysis (STPA) (Leveson, 2011). The new model shows that not only the pilots and instructors, but every control instance at every hierarchical level of the system can contribute to threat and error management in flight instruction. Thus, people at every control instance of the hierarchy can be better prepared to understand the system and to anticipate and deal with hazards related to their work. The STPA-based model of TEM can be used for safety training and instructor training, for developing requirements and standards, and for hazard analysis and the prevention of instructional incidents and accidents. Future work with the STPA-based TEM model will focus on refining the causal scenarios and developing instructor training programs. The model can also be used as a framework for incident and accident investigations and for elaborating safety requirements to prevent future accidents.

Acknowledgments

Preliminary versions of this study have been presented in the 2016 European Proceedings of Social & Behavioural Sciences (Koglbauer, 2016), and at the STAMP Workshop organized by the MIT Partnership for a Systems Approach to Safety, on March 30, 2017 at the Massachusetts Institute of Technology, Cambridge, MA (Koglbauer, 2017).

References

- ACG AustroControl (2014). Flight Examiner's Manual for Aeroplanes and Helicopters. Doc. HB LSA PEL 002, Version 5.0.

- Dekker, S. (2006). The field guide to understanding human error. Surrey, England: Ashgate.

- Dekker, S. (2011). Drift into failure. From hunting broken components to understanding complex systems. Surrey, England: Ashgate.

- EASA (2017). Mission. Retrieved August 10, 2017 from https://www.easa.europa.eu/the-agency/the-agency, 15:30.

- EASA (2011). Acceptable Means of Compliance and Guidance Material to Part FCL.

- EUROCONTROL, & FAA (2008). Safety Culture in Air Traffic Management. A white paper.

- Helmreich, R. L., Klinect, J. R., & Wilhelm, J. A. (1999). Models of threat, error, and CRM in flight operations. In R. Jensen (Ed.) Proceedings of the 10th International Symposium on Aviation Psychology. Columbus, OH: The Ohio State University, 677-682.

- ICAO (2017). Safety Report. Montréal, Canada: International Civil Aviation Organization.

- IFALPA (2017). IFALPA’s Mission. Retrieved August 10, 2017 from https://www.ifalpa.org/, 15:03.

- Ioannou, C., Harris, D., & Dahlstrom, N. (2017). Safety Management Practices Hindering the Development of Safety Performance Indicators in Aviation Service Providers. Aviation Psychology and Applied Human Factors, 7(2), 95-106. DOI: 10.1027/2192-0923/a000118

- Ishimatsu, T., Leveson, N., Fleming, C., Katahira, M., Miyamoto, Y. & Nakao, H. (2011). Multiple controller contributions to hazards. Conference of the International Association for the Advancement of Space Safety, Versailles, France.

- Janezic, J.J. (2016). Just Culture im Österreichischen Luftfahrtrecht de lege lata et de lege ferenda [Just Culture in The Austrian Aviation Law de lege lata et de lege ferenda]. Master Thesis. Graz, Austria: Graz University of Technology.

- Kearns, S.K., & Schermer, J.A. (2017). Survey of attitudes toward aviation safety management system (SMS) training. Aviation Psychology and Applied Human Factors, 7(1), 1-6. DOI: 10.1027/2192-0923/a000109

- Klinect, J.R. (2005). Line Operations Safety Audit: A cockpit observation methodology for monitoring commercial airline safety performance. Dissertation Thesis. Austin, Texas: University of Texas.

- Koglbauer, I. (2016). A System-Theoretic Model of Threat and Error Management in Flight Instruction. In V. Chis & I. Albulescu (Eds.) The European Proceedings of Social & Behavioural Sciences, 241-248.

- Koglbauer, I. (2017). STPA-based Model of Threat and Error Management in Dual Flight Instruction. 2017 STAMP Workshop, MIT Partnership for a Systems Approach to Safety, March 30, Massachusetts Institute of Technology, Cambridge, MA.

- Koglbauer, I., & Leveson, N. (2017). System-Theoretic Analysis of Air Vehicle Separation in Visual Flight. In M. Schwarz & J. Harfmann (Eds.), Proceedings of the 32nd Conference of the European Association for Aviation Psychology (pp. 117-131). Groningen, NL: European Association for Aviation Psychology.

- Leveson, N. (2011). Engineering a safer world. Cambridge, MA: MIT Press.

- Leveson, N.G. (2004). A new accident model for engineering safer systems. Safety Science, 42, 237-270.

- Rasmussen, J. (1997). Risk management in a dynamic society: a modelling problem. Safety Science, 27(2–3), 183-213.

- Sterman, J.D. (2000). Business Dynamics. Systems thinking and modelling for a complex world. Boston, U.S.A.: The McGraw-Hill Companies, Inc.

Copyright information

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

About this article

Publication Date

28 June 2018

eBook ISBN

978-1-80296-040-2

Publisher

Future Academy

Volume

41

Edition Number

1st Edition

Pages

1-889

Subjects

Teacher, teacher training, teaching skills, teaching techniques, special education, children with special needs

Cite this article as:

Koglbauer, I. (2018). Threat And Error Management Revisited. In V. Chis, & I. Albulescu (Eds.), Education, Reflection, Development – ERD 2017, vol 41. European Proceedings of Social and Behavioural Sciences (pp. 655-662). Future Academy. https://doi.org/10.15405/epsbs.2018.06.78