Abstract

The study examines the role of social media in driving people aged 15-29 to suicide in Russia, where the prevalence of suicide remains among the highest in the world (30th place as of 2017). It had been assumed that “death groups” running suicidal games in social media could be a leading cause of suicides. The primary research was conducted from January 2016 to February 2018 on all accounts of the social media Vkontakte, Facebook, Twitter, Instagram, Ask.fm, Ответы.mail, Youtube, Odnoklassniki, and Russian-language blogs and forums. The study was divided into two parts: manual and automated. For the manual part, 40 “death groups”, 400 participants in “death groups”, 400 accounts with signs of depression and 400 accounts with an expressed desire to commit suicide were analyzed. The methods included analysis of open data sources, analysis of statistical data, and methods of mathematical statistics. The data obtained formed the basis for a linguistic model for automated monitoring of social media. For the automated part, a system for automatic monitoring of social media was applied, and at-risk accounts were identified by linguistic markers indicating a risk of suicidal behavior. It was established that “death groups” account for only 1% of the total number of accounts with suicidal behavior and therefore cannot serve as a guide for detecting at-risk accounts. Suicidal markers in social media reveal a need for self-expression and for drawing attention to oneself.

Keywords: social media, death groups, Russian teenagers and youth, subculture, suicide culture, suicidal games

Introduction

According to the World Health Organization (WHO, 2017), more than 800,000 people commit suicide each year, that is, one person every 40 seconds. Suicide is the second leading cause of death among young people aged 15-29.
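The “one person every 40 seconds” figure follows from the annual total. A minimal arithmetic check, assuming a 365-day year and taking 800,000 as the quoted lower bound (the variable names are illustrative and do not come from the WHO source):

```python
# Rough check of the "one suicide every 40 seconds" figure.
# Assumptions: a 365-day year; 800,000 is the lower bound quoted in the text.
SECONDS_PER_YEAR = 365 * 24 * 60 * 60
deaths_per_year = 800_000

seconds_per_death = SECONDS_PER_YEAR / deaths_per_year
print(f"{seconds_per_death:.1f} seconds per death")  # prints 39.4 seconds per death
```

Because the annual total is stated as “more than 800,000”, the true interval would be somewhat shorter than this value.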

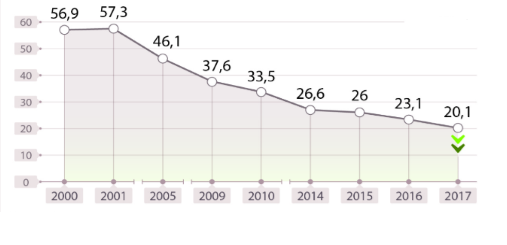

Suicide in the Russian Federation is a major social problem on a national scale. The suicide rate remains among the highest in the world, even though the number of suicides in Russia has been decreasing rapidly since 2001 (see Figure).

As of December 2017, the suicide rate in Russia was 15.8 per 100,000 population. The majority of people who commit suicide in Russia are men of working age. In addition, 1,500 children commit suicide and another 4,000 attempt it. According to UNICEF (2018), 45% of Russian girls and 27% of Russian boys have contemplated suicide at least once in their lifetime.

As of the beginning of 2017, Russia held 30th place in the world in the number of suicides.

Number of suicides (‘000)

There is concern about the impact of the Internet on people's psychological, cognitive and behavioral qualities. This concern is related to the emergence of a “death group” culture in Russia between 2016 and 2018, which spread actively, especially in social media.

Groups devoted to death and suicidal behavior appeared in Russian social media when the Runet was gaining popularity (early 2000s). At first they existed as blogs (then called diaries) and contained little original material. Users reposted the same articles and lists of ways to commit suicide.

In 2003-2005, a “suicidal hangout” was active on the LiveJournal blog hosting platform, consisting of a number of groups united by a common theme. Community interests revolved around suicide, death, depression, psychiatry, psychotropic drugs and so on. Members included both genuinely suicidal people and those who were merely interested in the topic and had no intention of committing suicide.

The topic of suicide became very popular in the mid-2000s, when the emo subculture spread in Russia. Emo promoted victimized, auto-aggressive and suicidal behavior. A large number of groups were created in social media, and blogs and articles appeared that dwelt on the value of mental and physical suffering. Emo followers cut themselves and made demonstrative suicide attempts. The music, videos and literature of the subculture openly promoted suicide and instilled in its followers the idea that life is meaningless and death is necessary. There are no official suicide statistics for emo teenagers, but the subculture was cited as one of the causes of suicide in the 2000s.

The theme of suicide in the emo subculture gave rise to mass panic and reached the Government of the Russian Federation. On June 2, 2008, the State Duma of Russia held parliamentary hearings at which the “Concept of state policy in the area of spiritual and ethical education of children in the Russian Federation and the protection of their morals” was discussed. An intention to fight the spread of emo was declared on the grounds that it promoted suicide among children. However, the draft law was criticized.

By 2012, the emo subculture had lost its popularity. Most followers left the community, and the influx of new followers fell sharply.

From 2012 to 2015, the problem of suicide attracted little public attention, although it persisted. Because the laws governing social media were weak, shock content such as murder, suicide, autopsies and animal abuse was publicly available.

On November 23, 2015, the suicide of Rina Palenkova, who threw herself under a train, marked the beginning of the death group story. There is a hypothesis that the girl was driven to suicide by the curator of one of the groups in the social network (f57, sea of whales).

On December 25, 2015, two schoolgirls from Ryazan committed suicide, also allegedly under the influence of death groups.

On March 10, users of social media began to spread false information that mass suicides of children would begin across Russia on March 12. The event did not happen, and the claim that a mass suicide had actually been planned was never confirmed.

On May 16, 2016, Galina Mursalieva's article “Death Groups” was published (Mursalieva, 2016).

On November 15, 2016, Philipp Budeikin, also known as Philipp Lis, the creator of the group f57, was arrested. He confessed to leading 15 people to suicide and to convincing another 28 people to commit suicide.

In February 2017, information about death groups was widely circulated on the Internet and searchable by hashtags: wake me up at 4.20, quiet house, blue whale, sea of whales, etc.

In April 2017, State Duma Vice Speaker Irina Yarovaya proposed a new bill on pushing minors to suicide with punishment terms of up to 8 years of imprisonment, for inducing adolescents to commit suicide - up to 6 years in prison, for organizing various kinds of “games” related to the threat to life. The bill was supported and adopted by the State Duma.

On June 8, 2017, the organizer of one of the death groups, who had pushed 32 minors toward suicide, was arrested in Moscow. One of his victims was hospitalized with poisoning.

In early December 2017, a new suicidal game, “red owl”, started spreading in Russian social media, but it received no response and its activity ceased.

In May 2018, there were signs of revival of suicidal games “u19” and “a new way”. Despite the massive circulation of information about the groups by the administrators themselves, the revived suicidal games did not spread.

Problem Statement

The urgency of the problem of suicidal behavior propagation in social media spurs interest among Internet users, citizens of Russia and representatives of the Russian government, and forms the need for thorough consideration of the issue. At the moment there is no detailed comprehensive study in this area.

In this paper, an attempt is made to investigate the spread of suicidal behavior in social media, the spread of the culture of “death groups” in social media, and to create a methodology for identifying signs of suicidal behavior in social media.

Also, in this paper, an attempt is made to structure the data and indicators pertaining to the problem, as well as to conduct their qualitative analysis.

Research Questions

- Are there any signs of suicidal behavior in social media?

- What signs indicate the risk of suicidal behavior in social media?

- What are the “death groups” in social media and what are their characteristic features?

- How prevalent is suicidal behavior in social media?

- What is the ratio of the number of participant accounts in “death groups” to the number of accounts that show signs of suicidal behavior but do not participate in “death groups”?

Purpose of the Study

The purpose of the study is to examine markers of suicidal behavior in communicative behaviour in social media.

Research Methods

Conceptual framework

Theoretical and methodological premises: ICD-10 (International Classification of Diseases, 10th Revision); fundamental suicidology (Efremov, 2004; Wojciech, 2008; Starshenbaum, 2005); the “Signal” method by Imaton (“Signal” Imaton, 2018); the suicidal risk questionnaire (Razuvaeva, 2014); the “suicide risk map” method (Schneider, 2018); the Buss-Durkee questionnaire (Buss & Durkee, 1957).

The main concepts of the work are suicidal behavior, suicide, and “death groups”. Suicidal behavior is considered in terms of the following stages:

- Suicidal predisposition: manifested by such personality traits as an anxiety-depressive radical, impulsiveness, interpersonal dependence, and frustration of needs with a desire to eliminate the frustration;

- Latent pre-suicide: a period when the individual is in a state of socio-psychological and mental maladaptation and in “motivational readiness” for suicidogenesis, while showing no signs of suicidal activity. It is characterized by minor changes in behavior (increased anxiety, mood changes, impaired communication, antisocial behavior, alcoholic excesses), suicidal fantasies, and periodic suicidal thoughts;

- Manifest pre-suicide: manifested by constant suicidal thoughts and suicidal remarks; drastic changes in behavior and lifestyle; various forms of self-destructive behavior;

- Acute pre-suicide (a state of psychological crisis, a direct threat of suicidal actions): manifested by overvalued suicidal thoughts and suicidal tendencies; a purposeful search for means of carrying out suicidal actions; direct or indirect “farewells” to loved ones; inadequate forms of behavior (ominous calm, ideational and psychomotor disinhibition, agitation);

- Suicidal actions (suicide attempt or suicide).

Participants and study protocols

The research was conducted from January 2016 to February 2018 on all accounts of the social media Vkontakte, Facebook, Twitter, Instagram, Ask.fm, Ответы.mail, video hosting Youtube, Russian-language blogs and forums.

The study was divided into two parts: automated and manual.

For the manual study, 40 “death groups”, 400 participants in “death groups”, 400 accounts with signs of depression and 400 accounts with an expressed desire to commit suicide were analyzed. The analysis of accounts was carried out by the following methods: analysis of open data sources (Content Analysis); analysis of statistical data; methods of mathematical statistics. The obtained data formed the basis for the development of a linguistic model for automated monitoring of social media.

For the automated part, the system of automatic monitoring of social media was applied, which identified at-risk accounts out of the total number of Russian social media accounts based on linguistic markers that showed signs of risk of suicidal behavior.

The criteria for selecting subjects were: the age and place of residence indicated in the account, Russian as the language of communication, the active status in social media.

The criteria for including accounts in the list of accounts with an expressed desire to commit suicide were as follows:

Direct expression of fantasies, desires and plans for committing suicide;

Membership in groups dedicated to suicidal culture.

The criteria for including accounts in the list of accounts with signs of depression:

Direct indication of the presence of depression in the account;

Membership in groups dedicated to depression.

The criteria for including accounts in the list of “death groups”:

Direct or indirect inducement of participants to suicide;

Conducting a suicidal game with certain rules, where the result should be the death of the participants.

The criteria for including accounts in the list of accounts of the followers of the “death groups” were:

Membership in “death groups”;

Participation in a suicidal game.

In the first part of the study, the following variables were analyzed:

Account activity time and dynamics;

Nickname and avatar of the account;

Filling in personal information in the account;

Account surroundings (friends, followers, commentators);

The interests of the account (the groups to which it is subscribed, the groups to which the account makes reposts);

Content of the list of video records of the account;

Content of the list of audio records of the account;

Prevailing topics of messages;

Prevailing modality of messages;

Prevailing message pattern;

Used slang;

Other linguistic features of texts (use of symbols, emoticons, etc.);

The nature of communication with other users of the network.

In the second part of the study, the following variables were analyzed:

The dynamics of the spread of suicidal behavior in social media;

Peaks of suicidal behavior in social media;

Geography of the spread of suicidal behavior in social media;

Sources of suicidal behavior spread in social media;

Behavioral features of the spread of suicidal behavior in social media.

Findings

At-risk signs of suicidal behavior in social media.

According to the results of the study of a series of accounts with signs of depression and suicidal behavior, the following behavioral and linguistic signs were established:

The main activity time is the night and early morning. The vast majority of accounts post most actively from 22:00 to 01:00 and from 03:00 to 08:00;

Most account nicknames are written in a foreign language or are transliterations of foreign names. Preference is given to characters from books, TV series and comics. The overwhelming majority use one of three types of avatar: 1 - anime pictures and pictures of drawn characters from games and comics; 2 - darkened images with a superhuman silhouette, as well as darkened images with blood stains; 3 - the user's own image in the Gothic style or with a hidden face (covered with a smiley, retouched, wearing a mask, etc.);

Most accounts prefer not to fill in personal information. Most fill in only a few fields: city of residence, contacts, marital status. Cities in the USA and Europe are given as the city of residence. The contacts field contains links to the user's other social media (most often Instagram);

For the vast majority of accounts, more than 30% of their environment shows signs of depression and suicidal behavior. Accounts with signs of suicidal behavior tend toward extreme numbers of friends: either fewer than 40 or more than 350;

In most accounts, more than 70% of interest groups are dedicated to depression, suicidal behavior, psychology, loneliness, death, physical and mental suffering, the afterlife, sects and esoteric movements;

The video lists of most accounts contain videos depicting physical and psychological violence, videos with depressive content, videos with shocking content, and fan videos (music groups, anime, video games);

The audio lists of most accounts contain rap and rock recordings. Among rap artists Lil Peep stands out, and among rock artists, Suicideboys.

Prevailing topics are demonstrative behavior, personal information and abstract topics. The predominant thematic focus is social risks, ritual services, oncology, eating disorders, medicines and body modifications.

Prevailing behavior patterns are demonstrative behavior, aggression and auto-aggression. The behavior expresses suicidal thoughts, devaluation of life, farewells to relatives, forgiveness of offenders, and suicidal actions.

The predominant modality of messages is negative (65%) or neutral (25%).

The slang of the group with suicidal behavior in social media was identified (See Appendix 1).

The linguistic features of the group with suicidal behavior in social media have been established (See Appendix 1).

Other notable linguistic features of the texts include the use of the skull, black heart and withered rose emojis.

It is established that accounts with signs of suicidal behavior in most cases remove posts with depressive and suicidal symptoms, and prefer depressive pictures.

In accounts with signs of depression and suicidal behavior, more than 70% of posts relate to the theme of death, including violent death.

More than 30% of accounts with signs of suicidal behavior post personal photos showing acts of auto-aggression. Of the accounts that post such photos, 95% show other listed signs of suicidal behavior.

Suicidal behavior in social media

Suicidal behavior in social media can be identified by the following symptoms:

Reflections on the lack of value of life, denial of life;

Undifferentiated thoughts;

Suicidal thoughts and fantasies (it would be good to die; it would be good to get sick);

A conscious desire to die;

Demonstrative behavior (the publication of photos and video materials containing episodes of death, suicide, physical injury);

Publication of information on suicides;

Strong negative emotions (in particular, resentment and anger);

Depression;

Elaborating a suicide plan;

Farewells to relatives, instructions regarding one's own death and burial, a will.

“Death groups” in social media

Currently, the total number of followers of active death groups is 1,109 accounts (excluding intersections). The total number of followers of active groups, groups under development, and inactive but possibly functioning groups is 1,532 accounts (excluding intersections).
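Counting followers “excluding intersections” amounts to counting each account once, however many groups it follows. A minimal sketch of that counting rule, using made-up group names and account IDs (none of the data or names below come from the study):

```python
# Hypothetical follower lists; group names and account IDs are invented
# purely to illustrate counting "excluding intersections".
group_followers = {
    "group_a": {101, 102, 103},
    "group_b": {102, 103, 104},
    "group_c": {105},
}


def unique_followers(groups):
    """Return the number of distinct accounts across all groups."""
    return len(set().union(*groups.values()))


# Naive total counts an account once per group it follows.
raw_total = sum(len(followers) for followers in group_followers.values())

print(raw_total, unique_followers(group_followers))  # prints 7 5
```

Under the same rule, the difference between the two reported totals, 1,532 - 1,109 = 423, would correspond to accounts that follow only groups under development or inactive groups.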

“Death groups” always follow a set procedure for entering a suicidal game. “Death groups” have the following characteristics:

Firstly, they are closely related to the net-stalking movement, which indicates that administrators and supervisors can use its methods to uncover personal data about their followers (for example, for blackmail);

Secondly, they are associated with pseudo-mystical cults of social media, which also have a destructive effect;

Thirdly, the “death groups” are closely connected with the groups dedicated to school-shooting, violence, Satanism;

Fourthly, all death groups that are active at the moment have direct and indirect links among themselves.

Fifthly, they use a set of hashtags: “quiet house”, “blue whale”, “wake me up at 4.20”, “the owl does not sleep,” “the owl never sleeps,” “the red owl,” “waiting for you 12 days “,” let's fly together “,” #1:36“, #deletedsky_1281, #dk_1281, #h13, #h33, #heleim13, #ins1ight3, #istok, #l13, #number998”, #number999, #t98, #u19, #u29, #ourtimeisover_it’sclose, #thetruthisnear, and so on.

Sixthly, the groups have certain rules for joining and membership;

Seventhly, groups have their own symbols and their own slang.

Rules of joining the game, hashtags and symbols.

To enter the game, you need to:

Publish group hashtags;

Put the symbol of the group on the avatar;

“Like” the post announcing the beginning of the game in the group.

There are also informal ways to enter the game:

Personally write to the group creator, curator or distributor;

Leave a comment on the page of the creator of the group, curator or distributor with an expression of desire to enter the game;

Write in the group (in comments or newsfeed of the group) with an expression of desire to enter the game;

Write on your page a message expressing the desire to join the game with hashtags or without;

Subscribe to the death groups.

It is important to note that the active groups mutate, and some are still under development. This means that groups can introduce new hashtags, symbols and rules, and can also set as their goal not just taking their own lives but committing more serious crimes. This probability is very high, given that the death groups are closely connected with school-shooting, pseudo-mystical cults, net-stalking, violence and Satanism. It is also worrying that many members of the death groups came to them from groups dedicated to school-shooting, serial killers, the A.U.E. movement, Nazism and Satanism (see Figure).

Prevalence of suicidal behavior in social media.

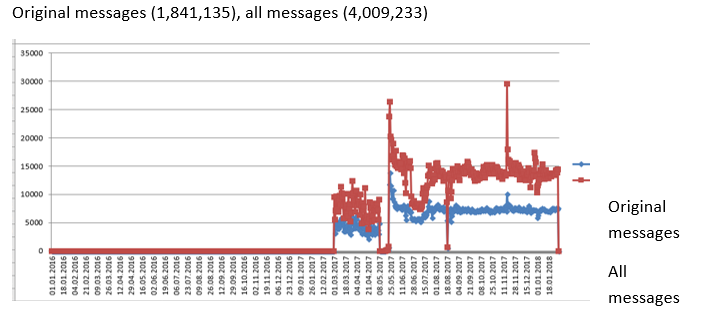

By analyzing the flow of information in social media with an automatic monitoring system, using key words from sets of trigger words, we obtained data on the characteristics of the spread of suicidal behavior in social media.

For the period from January 2016 to February 2018, 4,092,233 messages were published by 2,529,284 authors (excluding intersections).
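The reported totals allow two simple derived rates. A minimal sketch, assuming the observation period is counted inclusively as 26 calendar months (January 2016 through February 2018); the derived values are illustrative averages and are not figures reported by the study:

```python
# Derived averages from the reported totals.
# Assumption: the period January 2016 - February 2018 spans 26 months inclusive.
messages = 4_092_233
authors = 2_529_284
months = 12 + 12 + 2

messages_per_author = messages / authors
messages_per_month = messages / months

print(f"{messages_per_author:.2f} messages per author")  # prints 1.62 messages per author
print(f"{messages_per_month:,.0f} messages per month")
```

An average of well under two messages per author suggests that most authors touched on the topic only once or twice, although an average alone says nothing about how the messages are distributed across authors.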

Peaks in the spread of the topic occurred in February 2017, May 2017, November 2017 and January 2018 (see Figures).

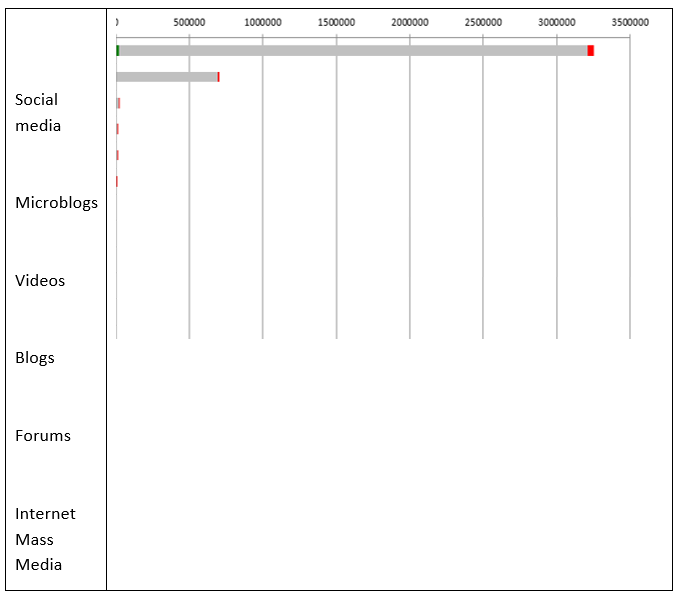

The main sites propagating the theme are social media and microblogs.

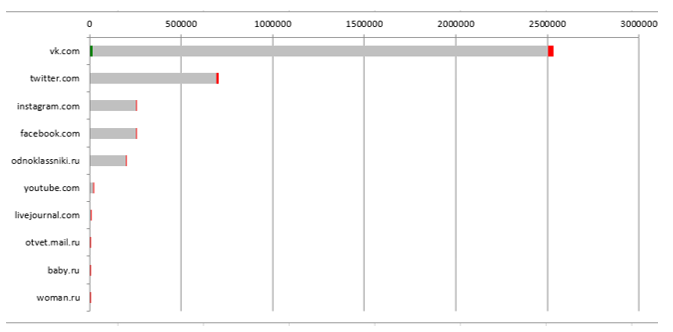

The theme is mainly promoted through social media: Vkontakte, Twitter, Instagram, Facebook, Odnoklassniki.

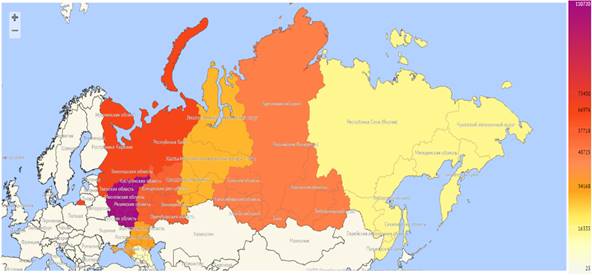

It is established that the theme is most prevalent in the following regions of Russia: Central Russia (Moscow and the Moscow region, Belgorod Region, Voronezh Region), St. Petersburg, and Krasnodar Territory (see Figure).

The ratio of the number of accounts of “death group” participants to the number of accounts showing at-risk suicidal behavior, which are not members of “death groups”.

The escalation of the problem of suicidal groups on the Internet focused attention on the spread of suicidal behavior in social media.

In reality, narrow groups “for the chosen few” exist everywhere, united not only by the theme of suicide but also by themes of murder, necrophilia, Satanism, perversions, etc. These groups cannot be found in group lists or by keyword search; only those who are genuinely interested can find them. The number of people in these groups varies from 1 thousand to 1.5 million. The content of such groups causes mental trauma and can aggravate mental disorders or cause behavioral disorders.

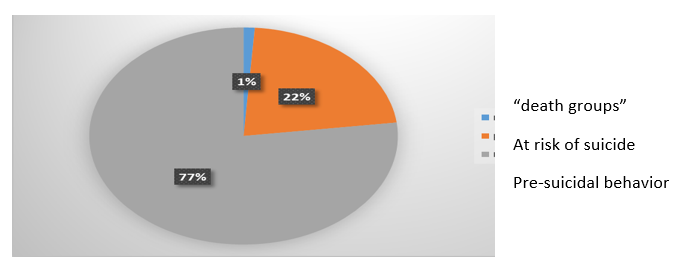

It has been established that accounts involved in death groups make up only 1% of the total number of accounts with suicidal behavior in social media.

It has been established that only 3% of accounts engaged in “death groups” demonstrate suicidal behavior (see Figure).

Conclusion

Suicidal behavior in social media is a topical problem of our time. There are markers for identifying signs of suicidal behavior in communicative behavior in social media.

It has been established that “death groups”, assumed to be the main cause of suicidal behavior in social media, account for only 1% of the total number of accounts with suicidal behavior and therefore cannot serve as a guide for detecting accounts with suicidal behavior.

The markers of suicidal behavior in social media are behavioral and linguistic features reflected in the public activity of the account.

It has been established that the overwhelming majority of the activity of accounts with signs of suicidal behavior is demonstrative in nature and serves self-expression and drawing attention to oneself.

References

- Ambrumova, A.G. & Tikhonenko, V.A. (1980). Suicidal behavior diagnostics. Moscow, Russia: Ministry of Healthcare of the Russian Federation, Moscow Scientific Research Institute of Psychiatry.

- WHO (2017). World Health Organization. Retrieved from http://www.who.int/ru

- Wojciech, V. (2008). Clinical suicidology. Moscow, Russia: Miklosh.

- Razuvaeva, T.N. (2014). Suicidal Risk Questionnaire. Retrieved from http://www.all-tests.ru/test/oprosnik-suicidalnogo-riska-tn-razuvaevoy

- Schneider, L.B. (2018). Diagnostics of suicidal behavior. Retrieved from http://xn--j1akam.xn--p1ai/psix_kabinet/diagnostika_suicidalnogo_riska.pdf

- Buss, A. H. & Durkee, A. (1957). Buss-Durkee Inventory. Retrieved from http://testoteka.narod.ru/lichn/1/37.html

- Mursalieva, G. (2016). Death groups. Retrieved from https://www.novayagazeta.ru/articles/2016/05/16/68604-gruppy-smerti-18

- Efremov, V.S. (2004). Fundamentals of Suicidology. Moscow, Russia: Dialect.

- Rosstat (2018). Retrieved from http://www.gks.ru/

- Starshenbaum, V.G. (2005). Suicidology and crisis psychotherapy. Moscow, Russia: Kogito-Centre.

- UNICEF (2018). Retrieved from https://www.unicef.org/eca/ru/

- “Signal” Imaton (2018). Computer app for suicidal risk assessment. Retrieved from http://www.imaton.com/metodiki/met/34/

Copyright information

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

About this article

Publication Date: 23 November 2018

Article DOI: https://doi.org/10.15405/epsbs.2018.11.02.23

eBook ISBN: 978-1-80296-048-8

Publisher: Future Academy

Volume: 49

Edition Number: 1st Edition

Pages: 1-840

Subjects: Educational psychology, child psychology, developmental psychology, cognitive psychology

Cite this article as:

Efremova, G. I., Nikishina, V. B., Samosvat, O. I., & Carroll, V. (2018). Suicidal Behavior: Communicative Markers In Social Media. In S. Malykh, & E. Nikulchev (Eds.), Psychology and Education - ICPE 2018, vol 49. European Proceedings of Social and Behavioural Sciences (pp. 209-221). Future Academy. https://doi.org/10.15405/epsbs.2018.11.02.23